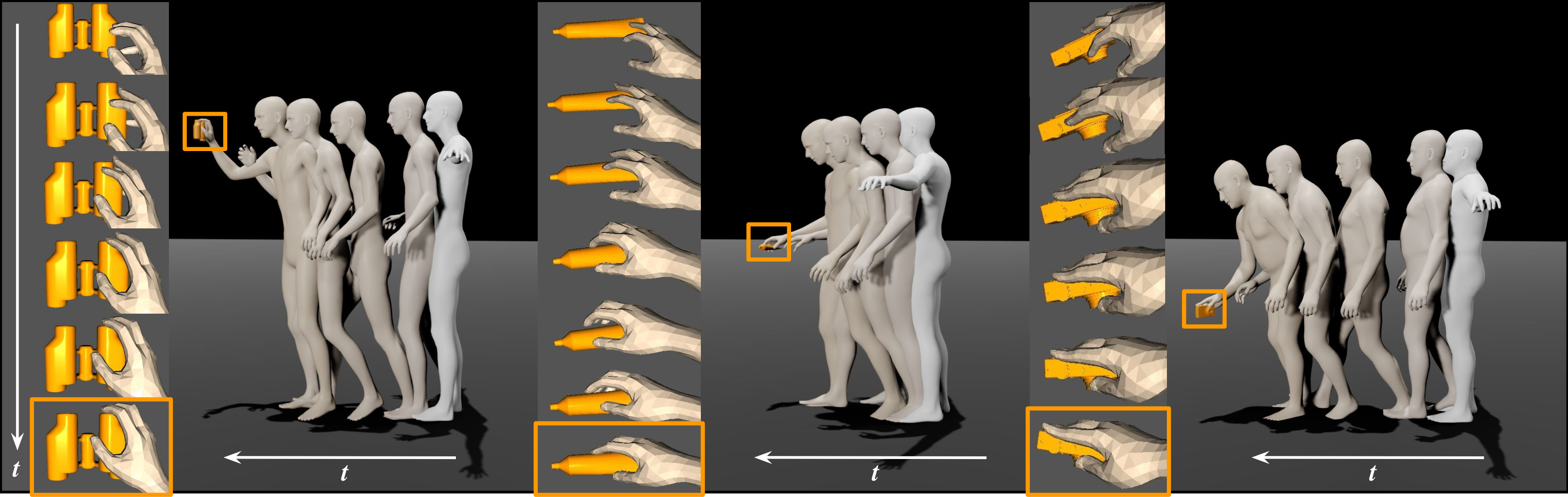

Overview. Whole-body grasping motion sequences (in beige) generated by SAGA, starting from a given pose (in white), to approach and grasp randomly placed unseen objects. For each sample, the hand and finger motions in the last few frames are shown in the left column.

Abstract

The synthesis of human grasping has numerous applications, including AR/VR, video games and robotics. While methods have been proposed to generate realistic hand-object interactions for object grasping and manipulation, they typically consider only the interacting hand. Our goal is to synthesize whole-body grasping motions: starting from an arbitrary initial pose, we aim to generate diverse and natural whole-body human motions that approach and grasp a target object in 3D space. This task is challenging because it requires modeling both whole-body dynamics and dexterous finger movements. To this end, we propose SAGA (StochAstic whole-body Grasping with contAct), a framework that consists of two key components. (a) Static whole-body grasping pose generation: we propose a multi-task generative model that jointly learns static whole-body grasping poses and human-object contacts. (b) Grasping motion infilling: given an initial pose and the generated whole-body grasping pose as the start and end of the motion, respectively, we design a novel contact-aware generative motion infilling module to generate a diverse set of grasp-oriented motions. We demonstrate the effectiveness of our method, a novel generative framework that synthesizes realistic and expressive whole-body motions which approach and grasp randomly placed unseen objects.
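To make the two-stage data flow concrete, here is a minimal toy sketch in Python with NumPy. It is not the SAGA implementation: `sample_grasp_pose` and `infill_motion` are hypothetical names, the 9-D pose vector (with its first three entries treated as a hand position) is an assumption, a fixed random linear map stands in for the learned multi-task generative model, and linear interpolation with endpoint-vanishing noise stands in for the learned contact-aware infilling module. It only illustrates the structure: first sample an end pose together with a contact map, then generate a stochastic motion whose first and last frames match the given start and end poses.

```python
import numpy as np

# Hypothetical toy dimensions; not taken from SAGA.
POSE_DIM = 9     # toy pose vector; entries [0:3] are treated as a hand position
LATENT_DIM = 4

# Fixed random weights standing in for a *learned* decoder (assumption).
_DECODER = np.random.default_rng(0).standard_normal((POSE_DIM, LATENT_DIM)) * 0.02


def sample_grasp_pose(object_points, rng):
    """Stage (a) placeholder: sample a latent code and decode a static grasp
    pose plus a soft per-point contact map on the object."""
    z = rng.standard_normal(LATENT_DIM)
    pose = _DECODER @ z
    # Anchor the toy hand near the object centroid.
    pose[:3] += object_points.mean(axis=0)
    # Contact probability decays with distance from the hand (soft threshold 0.1).
    dists = np.linalg.norm(object_points - pose[:3], axis=1)
    contacts = 1.0 / (1.0 + np.exp(10.0 * (dists - 0.1)))
    return pose, contacts


def infill_motion(start_pose, end_pose, num_frames, rng, noise_scale=0.05):
    """Stage (b) placeholder: produce num_frames poses whose first and last
    frames match start_pose and end_pose."""
    t = np.linspace(0.0, 1.0, num_frames)[:, None]       # (T, 1) time steps
    base = (1.0 - t) * start_pose + t * end_pose          # linear interpolation
    envelope = np.sin(np.pi * t)                           # ~0 at both endpoints
    noise = rng.standard_normal((num_frames, start_pose.shape[0])) * noise_scale
    return base + envelope * noise                         # stochastic in-between frames


# Usage: random object point cloud, neutral start pose, one sampled motion.
rng = np.random.default_rng(42)
obj = rng.uniform(-0.05, 0.05, size=(100, 3)) + np.array([0.5, 0.0, 0.8])
start = np.zeros(POSE_DIM)
end_pose, contacts = sample_grasp_pose(obj, rng)
motion = infill_motion(start, end_pose, num_frames=30, rng=rng)
print(motion.shape)  # (30, 9)
```

Because each call draws fresh random numbers, repeated calls yield different end poses and different trajectories, mirroring in toy form the stochastic, diverse outputs the abstract describes; a faithful version would replace both placeholders with trained models.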


Citation

SAGA: Stochastic Whole-Body Grasping with Contact

Yan Wu*, Jiahao Wang*, Yan Zhang, Siwei Zhang, Otmar Hilliges, Fisher Yu, Siyu Tang

@inproceedings{wu2022saga,
  title = {SAGA: Stochastic Whole-Body Grasping with Contact},
  author = {Wu, Yan and Wang, Jiahao and Zhang, Yan and Zhang, Siwei and Hilliges, Otmar and Yu, Fisher and Tang, Siyu},
  booktitle = {Proceedings of the European Conference on Computer Vision (ECCV)},
  year = {2022}
}

Team

| Yan Wu | Jiahao Wang | Yan Zhang | Siwei Zhang | Otmar Hilliges | Fisher Yu | Siyu Tang |
|---|---|---|---|---|---|---|

Contact

For questions, please contact:

Yan Wu: wuyan@student.ethz.ch

Jiahao Wang: jiwang@mpi-inf.mpg.de